AGI: Are We Almost There? Does It Matter?

AGI: Are We Almost There?

Why That’s the Wrong Question to Ask

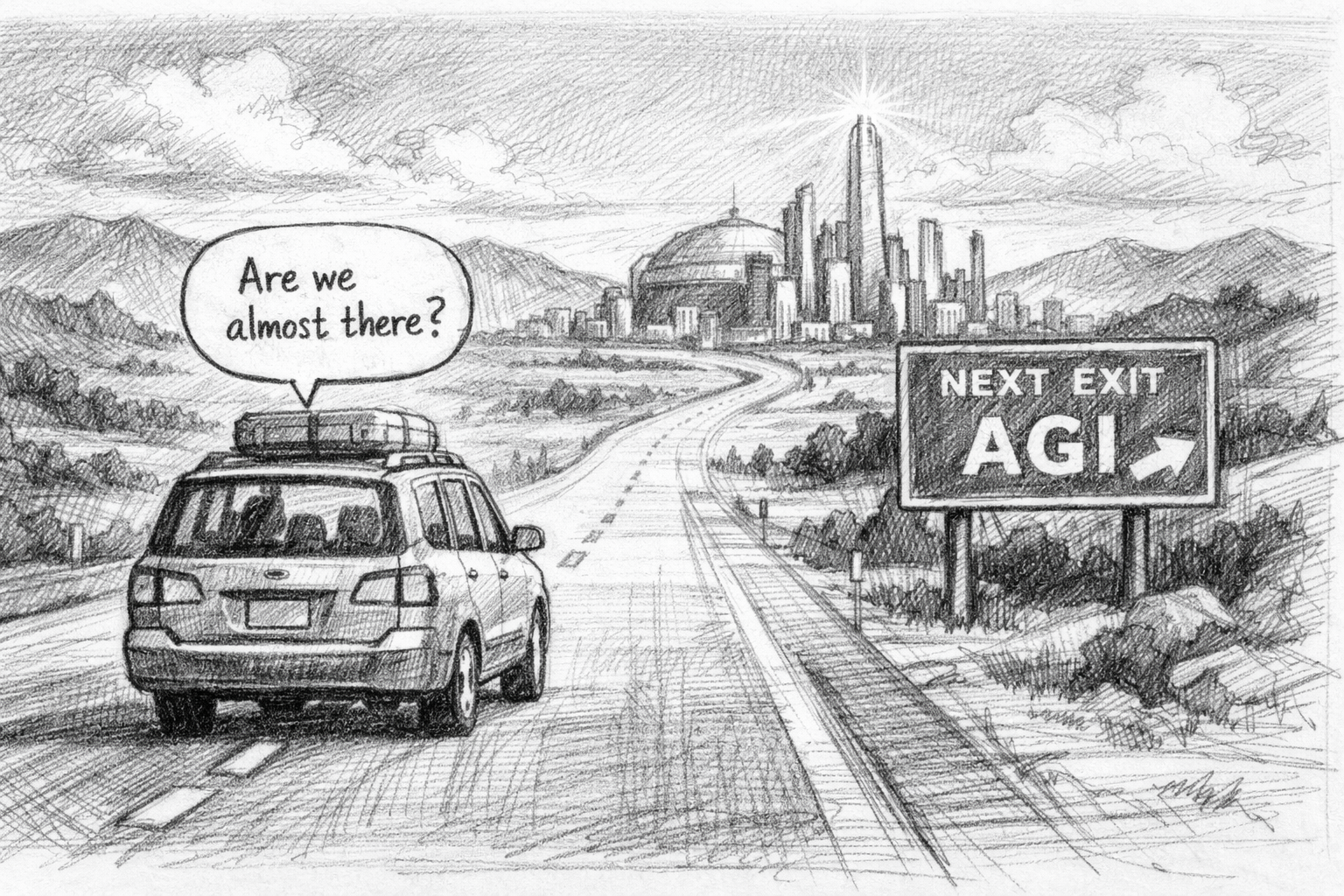

When I was young, my family would pile into our GMC truck and head across the country. In the pre-cellphone era, there wasn’t much for kids to do except stare out the window and think, or read a book. I loved the feeling of movement and uncertainty of what lay ahead. I also now appreciate how those trips trapped our family together for hours where we could talk about anything and everything. Sometimes disagreements broke out. Sometimes we had magical moments of shared insight. In the end, we always got to our destination together.

Inevitably, as kids do, one of us would get impatient and ask: “Are we almost there?”

This became a family joke. As soon as we pulled out of the driveway, someone would ask “are we almost there?” as if asking the question would get us to our destination faster. What it actually did was get that impatience out of the way, so we could settle into the conversations that made the trip worthwhile.

I’ve been thinking about this lately because the tech world keeps asking the same question about Artificial General Intelligence (AGI). Are we almost there? Will it be next month? Six months away? Two years? Five? The predictions multiply, each one treating AGI like a finish line we’re racing toward. As if a switch will be thrown and suddenly the world will be a different place.

But here’s what I’ve come to believe: “are we almost at AGI?” might be the wrong question entirely.

The Intelligence Measurement Trap

Our fascination with AGI is peculiar given that we don’t really know what intelligence is.

When I was studying Cognitive Science in school, it often surprised me how concepts like “intelligence” were so commonly used yet so poorly defined. We throw the word around as if it refers to something precise and measurable, but the closer you look, the fuzzier it gets. Is intelligence the ability to solve novel problems? To learn quickly? To reason abstractly? To adapt to new situations? All of these? Something else?

Traditionally, the tests we’ve created to measure intelligence, such as IQ tests and the SAT, are really designed to differentiate between humans. Flawed as they may be, they attempt to measure where you fall on a distribution of human knowledge or cognitive abilities. Even the Turing test takes human conversation as its yardstick. We don’t test whether you know how to breathe, or whether you’ll blink when an object flies toward your face. We don’t consider these to be “intelligence” because all humans can do them. They’re not differentiating.

But AI can’t do some of these things that we take for granted in any human. A toddler understands object permanence and basic physics in ways that confound sophisticated AI systems. Yet that same AI can diagnose diseases, write code, and beat grandmasters at chess—tasks we’ve traditionally considered markers of high intelligence.

This is the puzzle: AI is an alien intelligence. It doesn’t map onto our human-centric categories. Measuring it by our standards is like measuring a fish’s intelligence by how well it climbs trees. Since a human-centric yardstick like “AGI” fits this alien intelligence so poorly, we should instead measure its growth by the concrete capabilities it acquires, task by task.

The Threshold That Isn’t

There’s a seductive narrative that when we reach AGI, everything changes. A threshold is crossed. The before-times end and the after-times begin.

But this framing obscures what’s actually happening. The world changes every time AI gains a new capability. When AI could beat humans at chess, that mattered. When it could recognize faces, that mattered. When it could generate images from text, or write passable code, or engage in nuanced conversation—each of these shifted what was possible. Each created new automations, new economic pressures, new opportunities and disruptions.

We didn’t need to wait for “general” intelligence for any of this. The changes are happening now, capability by capability, task by task.

If there are thresholds that matter, they’re not the abstract threshold of “AGI.” They’re the concrete thresholds of specific capabilities: When can AI do this particular task better than a human? When can it do that one? These are the questions that have practical implications for how we work, what we build, and how we organize our lives.

Task Displacement, Not Job Displacement

This reframe—from abstract intelligence to concrete capabilities—changes how we should think about AI’s impact on work.

The common question is: “Will AI take my job?” But a job is really a bundle of tasks. And AI doesn’t take over bundles wholesale; it becomes capable of specific tasks, one at a time, at different rates.

For example, a lawyer’s job may include legal research, document drafting, client counseling, courtroom advocacy, and strategic judgment. AI might become excellent at research and drafting while remaining poor at reading a client’s emotional state or making judgment calls under uncertainty. The job doesn’t disappear; it transforms. The mix of tasks shifts. The skills that matter change.

This is why “job displacement” is too blunt a concept. “Task displacement” is more precise and more useful. Which specific tasks can AI now do? Which can it do better than humans? Which still require human judgment, presence, or accountability?

When you analyze work at the task level, the picture becomes more nuanced. Yes, some jobs will shrink or vanish as AI absorbs most of their constituent tasks. But many jobs will persist in altered form, with humans focusing on the tasks that remain distinctly human—at least for now.

This doesn’t make the disruption less real. Fewer people may be needed even when jobs don’t disappear entirely. But it gives us a more honest framework for thinking about adaptation. Instead of asking “is my job safe?” you can ask “which of my tasks are safe, and how do I build expertise in those?”

The Destination We Can’t Quite See

Back to the road trip.

As a child, I often didn’t really understand the destination we were headed for. Even if I knew we were going to my grandparents’ house, there was so much uncertainty in my mind. Would they have ice cream for us? Would my cousins be there? What would we do? The destination wasn’t as clear as “grandparents’ house” implied.

The same is true now. We know we’re heading down the highway at high speed toward a future with a lot more AI. But the specifics of that destination remain uncertain, even to the experts confidently making predictions. Will AI systems become generally capable? Probably, in some sense, eventually. But what that means, what it feels like to live in that world, what it implies for how we work and relate to each other—none of that is knowable in advance.

What I remember most about those family road trips isn’t the destination. It’s the conversations along the way. The arguments about nothing. The deep talks that emerged from boredom. The shared experience of uncertainty and anticipation.

What To Do While We’re In The Car

So here we are, packed into this metaphorical vehicle together, hurtling toward a future none of us fully understand. We can keep asking “are we almost there?” while debating timelines, predicting breakthroughs, and treating AGI as a finish line that will clarify everything once we cross it. Or we can settle into the ride and focus on what we can actually do.

Here’s what I think that looks like:

Stop obsessing over AGI. Whether we’ve achieved it, when we’ll achieve it, what the exact definition is—these questions generate more heat than light. The world is already changing. The capabilities that matter are already here or emerging. Arguing about whether the current systems count as “truly intelligent” is a distraction from the more practical question of what they can do.

Pay attention to tasks, not abstractions. Instead of asking “is AI intelligent?” ask “what tasks can AI now perform?” This is measurable, practical, and actionable. Track the capabilities as they emerge. Notice which tasks in your own work are becoming automatable. This is where the real information is.

Find the tasks where humans and AI can work together. Some tasks are easy for AI and hard for humans. Some are the reverse. The most interesting space is where human and machine capabilities complement each other—where AI handles volume and pattern-matching while humans provide judgment, creativity, and contextual understanding. Finding those complementary pairings is a more useful project than fighting over territory.

Reconsider what “intelligence” means for you. Howard Gardner’s work on multiple intelligences is worth revisiting here. He argued that intelligence isn’t a single thing—it includes linguistic, logical-mathematical, spatial, musical, bodily-kinesthetic, interpersonal, and intrapersonal dimensions, among others. But even Gardner’s framework is still fairly abstract. The tasks view is more practical: each of these “intelligences” really represents clusters of tasks that humans can perform. Interpersonal intelligence is the ability to read a room, build trust, navigate conflict, comfort someone in distress. Bodily-kinesthetic intelligence is the ability to perform surgery, dance, or fix a leaky pipe. A physical therapist’s ability to ‘read’ the micro-expressions of a patient in pain, or a construction manager’s skill in navigating dozens of human egos to stay on schedule, are clusters of ‘interpersonal’ and ‘contextual’ tasks that remain profoundly human. Many of us in knowledge work have built our identities around the logical and linguistic tasks—exactly where AI is advancing fastest. If you’ve spent your life feeling valuable because of your “smarts,” it might be worth asking: which tasks do I perform well that AI still struggles with? That’s a more useful question than “am I intelligent enough to stay relevant?”

Understand that you’re in the car. There’s a natural impulse to resist this change, to fight it, to insist that AI will never really be able to do what humans do. I understand that impulse. But the car is moving. I don’t know how to stop it, and I’m not sure we should want to. The more productive stance is to accept that we’re on this journey and focus our energy on making the trip and the destination as good as they can be. That means engaging with AI tools rather than dismissing them. It means adapting rather than resisting. It means having honest conversations about where we’re headed while we still have time to influence the direction.

Conduct a personal task audit. Take one hour this week to list the 10 core tasks that make up your job. For each one, ask: can a current AI tool perform 80% of this right now? This exercise immediately shifts your perspective from the abstract debate about AGI to a practical assessment of your own daily work. It may be the most direct way to identify where to adapt and build new expertise.

The question “are we almost there?” is a child’s question—impatient, focused on arrival, missing the richness of the journey. I asked it too, many times. But the best parts of those road trips happened after we got that question out of our systems and settled into the ride.

Maybe it’s time to do the same with AGI.

Questions for the Road

- What AI capabilities are already affecting your work or life, regardless of whether we’ve reached “AGI”?

- Which of your tasks feel most vulnerable to automation? Which feel most distinctly human?

- What forms of intelligence—beyond the logical and linguistic—do you possess that might become more valuable?

- If you could shape the destination—the world we’re heading toward—what would you want it to look like?

What do you think? I’d love to hear your thoughts.
